Imagine a world where dialogue between humans and non-human animals is possible, a future where we could understand what whales are saying. Long considered the stuff of science fiction, decoding whale communication has become a thriving field of research. With the advent of artificial intelligence, research teams around the world are diving into the mysterious soundscape of cetaceans to uncover the hidden patterns of their language.

A unique interaction

On December 12, 2023, in southeastern Alaska, a historic exchange took place between scientists from the Whale-SETI team and a 40-year-old adult humpback whale nicknamed Twain. The research team had played a multitude of sounds that day through an underwater speaker, but had received no response.

When the team played a recording of a contact call captured the previous day in the same population, they witnessed an extraordinary event: a whale appeared to respond! Twain had apparently left her pod to approach and circle the boat, initiating a sort of exchange. To maintain her attention, the researchers matched her response latency. In other words, if the whale took 10 seconds to respond, the team would wait 10 seconds before answering. The exchange lasted 20 minutes, during which the whale participated vocally and physically in three phases of interaction.

Composed of members of the SETI Institute and the Alaska Whale Foundation, the Whale-SETI team has been studying humpback whale communication systems in an effort to develop intelligence filters for the search for extraterrestrial intelligence. The team hypothesizes that whale communication carries complex meaning and may even rival human language in sophistication. With the help of artificial intelligence (AI), the team aims to measure the complexity of humpback whale communication and understand the rules and structures of the animals’ messages.

Project CETI

More than 8,000 km away, a multidisciplinary team based in Dominica is listening to sperm whale vocalizations and working to decode them using advanced machine learning and robotics.

By analyzing a data set accumulated over more than a decade and covering nearly 9,000 codas from the Eastern Caribbean sperm whale clan, the Project CETI team discovered in 2024 that sperm whales may actually possess a phonetic alphabet. These toothed whales communicate using codas: short bursts of clicks interspersed with silences of variable duration. These series of clicks can be combined to form complex sequences similar to Morse code.

The study illustrates that their codas form a communication system that blends rubato and ornamentation with rhythm and tempo. In other words, their click sequences have a basic, invariable pattern defined by rhythm and tempo. This pattern is then modified by the addition of extra clicks, known as ornamentation, and by slight variations in the timing between clicks, known as rubato, explains Dr. Daniela Rus, director of the MIT Computer Science and Artificial Intelligence Laboratory and member of CETI’s machine learning and robotics teams. “While the communicative function of many codas remains an open question, our results show that the sperm whale communication system is, in principle, capable of representing a large space of possible meanings,” the authors of the study point out. Put simply, even if the meaning of the codas remains unclear, the possibilities are vast.
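To make the idea of rhythm and tempo concrete, here is a minimal Python sketch. It assumes a coda can be represented as a list of click times in seconds, takes tempo to be the coda's total duration, and takes rhythm to be the gaps between clicks expressed as fractions of that duration. The function name `coda_features` and the sample click times are invented for illustration; this is not Project CETI's code or data.

```python
from typing import List, Tuple


def coda_features(click_times: List[float]) -> Tuple[float, List[float]]:
    """Return (tempo, rhythm) for one coda.

    Assumptions for this sketch:
    - tempo is the total duration of the coda, in seconds
    - rhythm is each inter-click interval divided by that duration,
      so two codas with the same pattern played at different speeds
      share the same rhythm
    """
    if len(click_times) < 2:
        raise ValueError("a coda needs at least two clicks")
    duration = click_times[-1] - click_times[0]
    if duration <= 0:
        raise ValueError("click times must be increasing")
    intervals = [later - earlier
                 for earlier, later in zip(click_times, click_times[1:])]
    rhythm = [interval / duration for interval in intervals]
    return duration, rhythm


# Hypothetical click times: the same pattern at two different speeds.
fast_coda = [0.0, 0.2, 0.4, 0.8]
slow_coda = [0.0, 0.3, 0.6, 1.2]

fast_tempo, fast_rhythm = coda_features(fast_coda)
slow_tempo, slow_rhythm = coda_features(slow_coda)
print(fast_tempo, fast_rhythm)
print(slow_tempo, slow_rhythm)
```

The two sample codas differ in tempo but share essentially the same rhythm. In this representation, an ornament would show up as an extra click at the end of the list, and rubato as small drifts in duration from one coda to the next.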

Crucial research on the horizon

Not far away, off the coast of the Bahamas, the Wild Dolphin Project (WDP) is conducting the world’s longest-running underwater dolphin research using a non-invasive approach. The team studies the Atlantic spotted dolphin community in its natural habitat. One of its goals is to observe and analyze communication and interactions in an attempt to establish links between specific sounds and behaviours. The scientists hope to further their understanding of the structure and potential meaning of these sound sequences. This colossal undertaking will be supported by artificial intelligence.

The WDP is beginning fieldwork this season with DolphinGemma, an AI foundation model that takes audio as input and produces audio as output. Developed by Google, it was trained primarily on the WDP’s acoustic database of Atlantic spotted dolphins. A foundation model is a large model trained on massive data sets that subsequently serves as a basis for performing various specialized tasks. Audio-in, audio-out means that the model directly receives vocalizations as input and generates new sound sequences (as opposed to text or images) as output. The model aims to capture how vocalizations are structured. By relying on prediction mechanisms similar to those of human language models, it anticipates the sounds likely to come next in a sequence and generates new, similar sounds. The tool should thus help scientists pick out recurring sound patterns and potentially discover structures and meanings in the way dolphins naturally communicate.
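The prediction idea can be illustrated with a deliberately tiny Python sketch. It assumes that recordings have already been converted into sequences of discrete sound labels (the letters below are invented), and it simply counts which label tends to follow which. DolphinGemma itself is a large neural model working on audio; this toy counter is not its implementation, only an illustration of "predict what comes next".

```python
from collections import Counter, defaultdict
from typing import Dict, List, Optional


def train_next_sound_counts(sequences: List[List[str]]) -> Dict[str, Counter]:
    """Count, for each sound label, which labels follow it and how often."""
    counts: Dict[str, Counter] = defaultdict(Counter)
    for sequence in sequences:
        for current, following in zip(sequence, sequence[1:]):
            counts[current][following] += 1
    return counts


def predict_next_sound(counts: Dict[str, Counter], current: str) -> Optional[str]:
    """Return the label seen most often after `current`, or None if unseen."""
    followers = counts.get(current)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical labelled sequences; real data would come from recordings.
recordings = [
    ["A", "B", "C"],
    ["A", "B", "D"],
    ["A", "B", "C"],
]
model = train_next_sound_counts(recordings)
print(predict_next_sound(model, "B"))
```

Here "C" follows "B" more often than "D" does, so it becomes the predicted next sound. A language-model-style system does the same kind of thing at a vastly larger scale and with far richer context.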

Chatting with dolphins

WDP is also pursuing a two-way interaction project between dolphins and humans to develop a shared vocabulary using an underwater computer called CHAT (Cetacean Hearing and Telemetry), which was designed in partnership with the Georgia Institute of Technology. The idea is to create an association between new, CHAT-generated artificial whistles outside the natural repertoire and objects that dolphins enjoy, such as the Sargassum seaweed they use for play. The research team hopes that the naturally curious dolphins will learn to mimic these whistles in order to request these items. The goal of this technology is to explore the potential for two-way interaction, better understand dolphin communication, and create shared linguistic references.

To facilitate this interaction, the CHAT computer is worn by divers. It picks up sounds via two hydrophones and plays sounds through an underwater speaker; it also has a library of synthetic whistles that it can recognize. When the computer emits a whistle triggered by a diver, it also plays a “translation” of its meaning through the diver’s headset. Likewise, when the computer recognizes one of the artificial whistles produced by a dolphin, this is also translated directly to the diver. Thus, when a dolphin communicates with a whistle from CHAT’s sound library, researchers can quickly understand and respond by offering the correct object, thereby reinforcing the association. For example, if the computer recognizes the whistle associated with Sargassum (the seaweed used in the game), it signals this to the diver, who then shows the seaweed to the dolphin.
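The recognition step described above can be sketched in a few lines of Python. This sketch assumes each whistle has been reduced to a short list of frequencies in hertz (its "contour") and that matching means finding the closest entry in the library within a tolerance. The function `match_whistle`, the threshold, and the library contents are all hypothetical; the article does not describe how CHAT actually performs recognition.

```python
from typing import Dict, List, Optional


def match_whistle(contour: List[float],
                  library: Dict[str, List[float]],
                  max_mean_error_hz: float = 500.0) -> Optional[str]:
    """Return the label of the closest library whistle, or None if nothing fits.

    Closeness is the mean absolute difference in hertz between the heard
    contour and a template of the same length (an assumption of this sketch).
    """
    best_label: Optional[str] = None
    best_error = max_mean_error_hz
    for label, template in library.items():
        if len(template) != len(contour):
            continue  # this sketch only compares contours of equal length
        error = sum(abs(heard - expected)
                    for heard, expected in zip(contour, template)) / len(template)
        if error <= best_error:
            best_label, best_error = label, error
    return best_label


# Hypothetical library: labels and frequencies are invented for illustration.
whistle_library = {
    "sargassum": [8000.0, 9000.0, 10000.0],
    "other_toy": [12000.0, 11000.0, 10000.0],
}

heard = [8100.0, 9050.0, 9900.0]  # a slightly imperfect imitation
label = match_whistle(heard, whistle_library)
if label is not None:
    print(f"Tell the diver: dolphin requested '{label}'")
```

Returning `None` when nothing is close enough matters in practice: most sounds a dolphin makes will not be one of the artificial whistles, and the diver should only be alerted when there is a plausible match.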

Ethical and legal implications

Understanding whales through non-human animal communication technologies (NACTs) could revolutionize our relationship with living beings. Translating their communication could foster curiosity and empathy toward cetaceans and other animals. It could also prevent harm caused by human activities and allow for better tailored protection measures by providing a deeper understanding of the impact of our societies on their lives. It could support and strengthen legal battles for animal rights by providing concrete evidence of their sentience—in other words, the capacity of a living being to feel emotions, perceive its environment, and experience subjective sensations such as pain and pleasure.

However, these technologies are not without risks and do not guarantee a better world for all living beings. As these advances attract attention and funding from all directions, the risks can be exacerbated by pressure from external actors and the monetization of data.

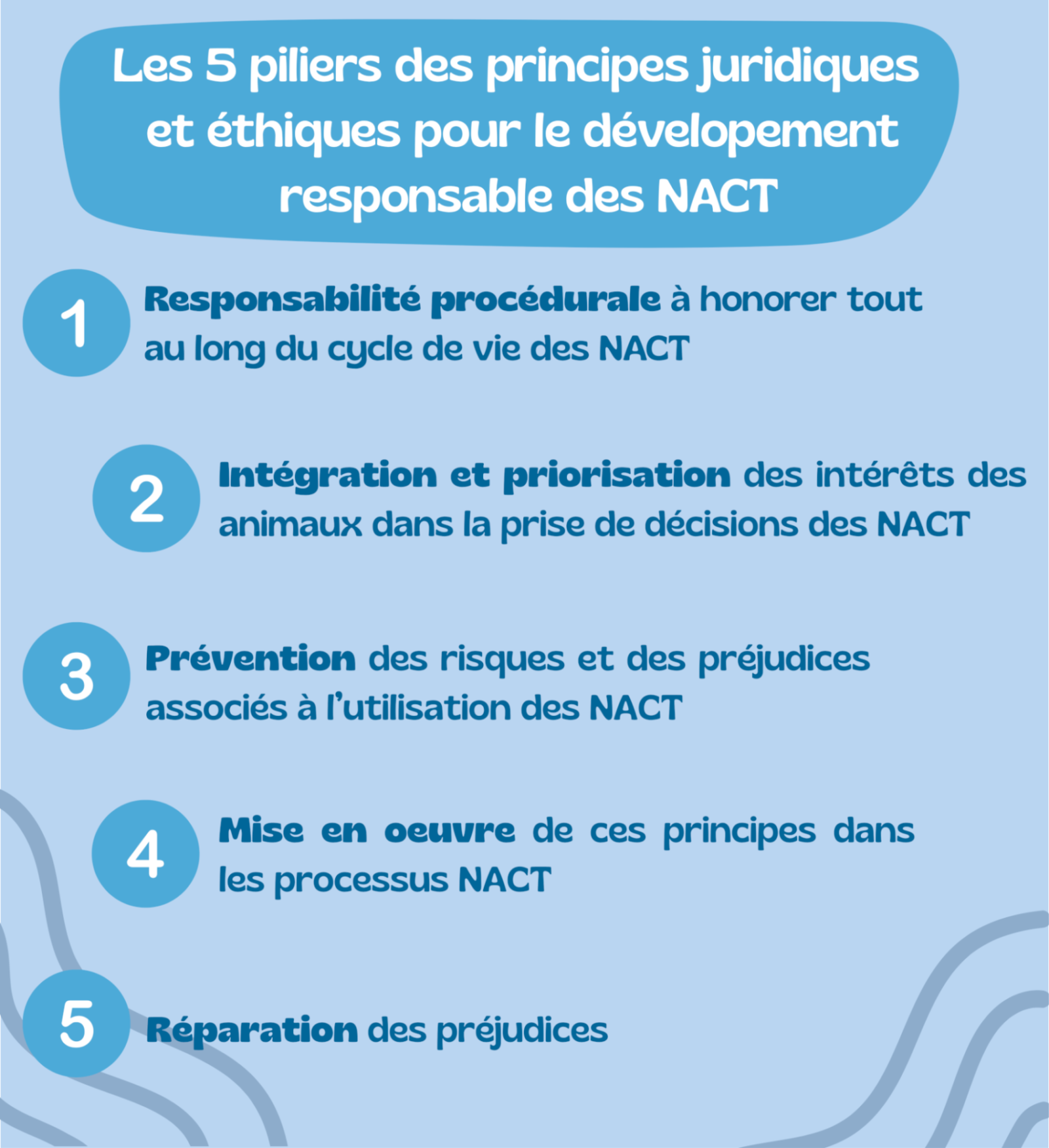

Currently, apart from the oversight of Institutional Animal Care and Use Committees, there are no laws governing communication technologies for non-human animals. The More-Than-Human-Life (MOTH) program at New York University’s School of Law has sought to fill this gap by undertaking a research project to develop a set of legal and ethical principles for the responsible development of these technologies. Organized around five pillars, the principles rest on the fundamental value that non-human animals are subjects, not objects, and that these technologies should be used to create a bond of kinship with living beings, not as a tool for dominating them. A report setting out the principles is due to be published shortly and will be open for endorsement and adaptation by stakeholders in these technologies, with the hope of minimizing the risks and creating a world in which whales, and other non-human animals, have a voice. Let’s hope we will have the sensitivity and compassion to listen.